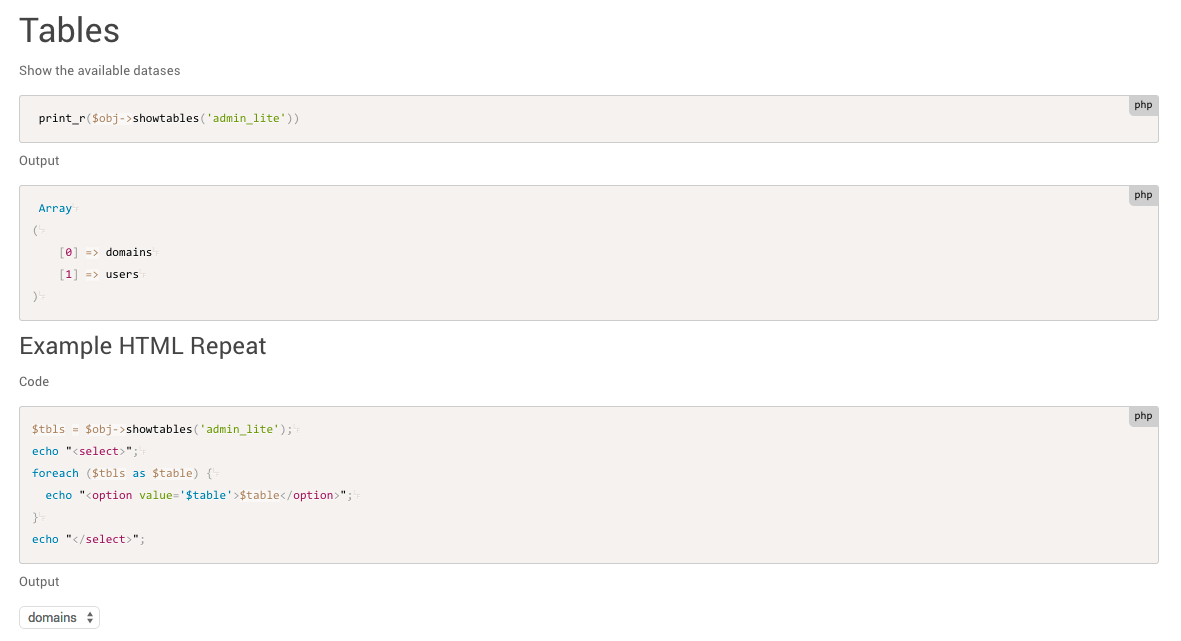

We can use the `read_table()` function to pull data from a text file. We can also use `read_csv()` with `sep="\t"` to read data from a tab-separated file.

`mydata = pd.read_table("C:\\Users\\Deepanshu\\Desktop\\example2.txt")`

`mydata = pd.read_csv("C:\\Users\\Deepanshu\\Desktop\\example2.txt", sep="\t")`

The `read_excel()` function can be used to import Excel data into Python. If you do not specify the name of the sheet in the `sheet_name=` option, the first sheet is read by default.

`mydata = pd.read_excel("", sheet_name="Data 1", skiprows=2)`

Suppose you need to import a file that is separated with white spaces.

`mydata2 = pd.read_table("", sep=r"\s+", header=None)`

To include variable names, use the `names=` option.

We can import a SAS data file by using the `read_sas()` function. If you have a large SAS file, you can try the pyreadstat package, which is faster than pandas. It is equivalent to the haven package in R, which provides an easy and fast way to read data from SAS, SPSS and Stata. To install this package, use the command `pip install pyreadstat`.

`df, meta = pyreadstat.read_sas7bdat('cars.sas7bdat')`

We can load a Stata data file via the `read_stata()` function.

`mydata41 = pd.read_stata('cars.dta')`

The pyreadstat package also lets you pull value labels from Stata files.

`df, meta = pyreadstat.read_dta("cars.dta")`

To get labels, set `apply_value_formats` to `True`.

`df, meta = pyreadstat.read_dta("cars.dta", apply_value_formats=True)`

With the `read_r()` function from the pyreadr package, we can import R data format files. You can install this package with `pip install pyreadr`.

`result = pyreadr.read_r('C:/Users/sampledata.RData')`

`print(result.keys())  # let's check what objects we got`

`df1 = result["df1"]  # extract the pandas data frame for object df1`

Similarly, you can read .Rds format files, which generally contain a single R data frame.

We can extract a table from a SQL database (SQL Server / Teradata). UdaExec provides DevOps support features such as configuration and logging.
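For the whitespace-separated case, the `names=` option can be sketched as follows. This is a minimal illustration only: the data and the column names (`id`, `name`, `score`) are made up, and an in-memory `io.StringIO` buffer stands in for a file on disk so the snippet runs anywhere.

```python
import io

import pandas as pd

# Hypothetical whitespace-separated data, held in memory instead of a file on disk.
raw = io.StringIO(
    "1  Alice   82.5\n"
    "2  Bob     90\n"
    "3  Carol   77.25\n"
)

# sep=r"\s+" treats any run of spaces or tabs as a single delimiter.
# header=None says the first row is data, and names= supplies the column names.
mydata2 = pd.read_table(
    raw,
    sep=r"\s+",
    header=None,
    names=["id", "name", "score"],  # assumed names, for illustration only
)

print(mydata2)
```

Without `names=`, pandas would label the columns 0, 1 and 2. Using a raw string for the separator avoids Python warning about the `\s` escape sequence.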
Simply put URL in read_csv() function (applicable only for CSV files stored in URL).

You don't need to perform additional steps to fetch data from URL. Keep up with my latest posts by following my blog on Twitter!.Detailed Explanation : Import CSV File in Python 2. In a future post, we’ll explore their additional functionality. That’s it for this post! There’s much more to turbodbc and pyodbc. Lastly, let’s fetch all of the rows using the familiar fetchall methowed. Now, using the same syntax as with pyodbc, we can execute our same SQL query.Ĭursor.execute("select * from sample_table ")

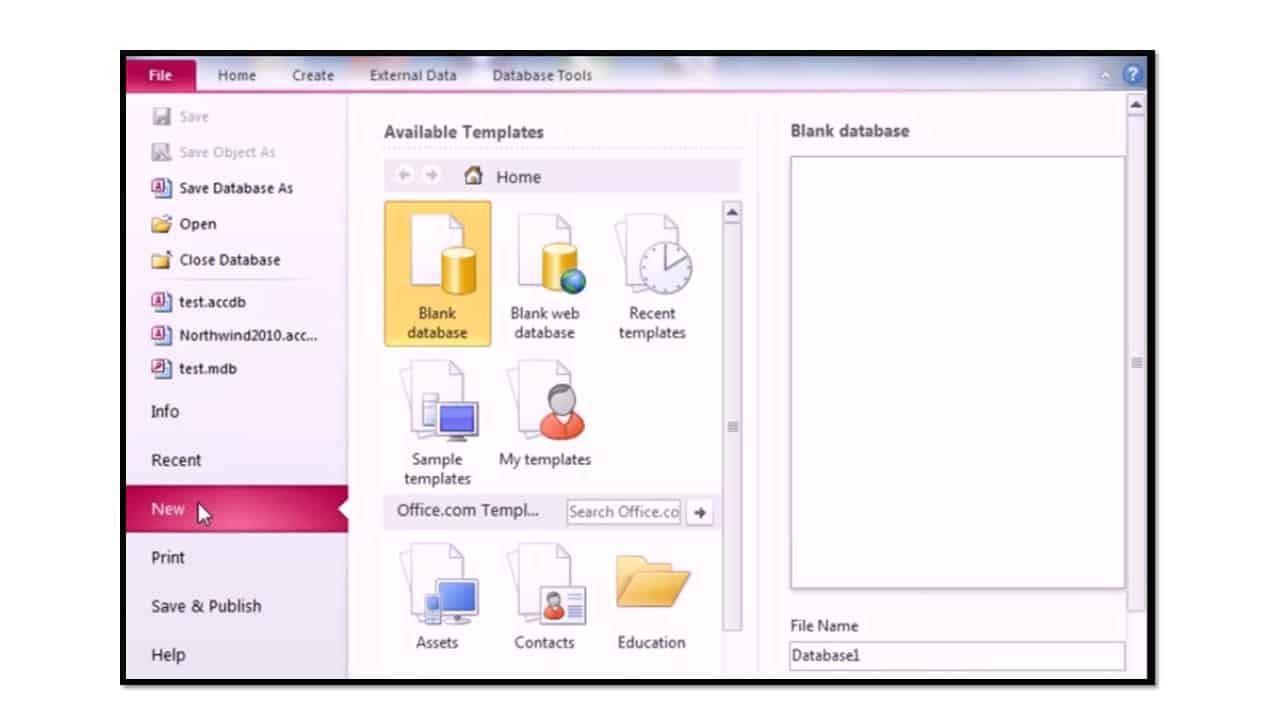

nnect(dsn = "SQLite3 Datasource")Īfter the connection is created, we can define a cursor object, like what we did earlier in this post. We can use either the connection string, like previously, or directly specify the DSN (“data source name”). Similar to the above examples, we can connect to our database using a one-liner command. If you don’t have conda, see here.Īfter that is done, the next step is to import turbodbc package. This will install all necessary dependencies across different platforms. It’s recommended to install turbodbc using conda install, like below. The use of buffers, together with NumPy on the backend, combines to make the data type conversions faster between the database and the Python results. For example, turbodbc uses buffers to speed up returning multiple rows. However, a primary advantage of turbodbc is that it is usually faster in extracting data than pyodbc. On the surface, these packages have similar syntax. Now that we’ve reviewed pyodbc, let’s talk about the turbodbc. Pypyodbc has a handful of methods that do not currently exist in pyodbc, such as the ability to more easily create Access database files. If you have a trusted connection setup, then you can specify that (like in the first example below).Ĭhannel = nnect("DRIVER= SERVER=localhost DATABASE=sample_database.db Trusted_connection=yes") one of the database systems mentioned), the server, database name, and potentially username / password. In the connection string, we specify the database driver (e.g. However, you can do this with many other database systems, as well, such as SQL Server, MySQL, Oracle, etc. In the examples laid out here, we will be using a SQLite database on my machine. To do that, we first need to connect to a specific database.

Let’s start by writing a simple SQL query using pyodbc. We’ll start by covering pyodbc, which is one of the more standard packages used for working with databases, but we’ll also cover a very useful module called turbodbc, which allows you to run SQL queries efficiently (and generally faster) within Python. This post will talk about several packages for working with databases using Python. Background – Reading from Databases with Python
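The connect, cursor, execute and fetchall sequence used with pyodbc and turbodbc can be tried without installing an ODBC driver. The sketch below is not pyodbc or turbodbc code: it swaps in Python's built-in `sqlite3` module, which follows the same DB-API pattern, and it assumes a made-up `sample_table` with two columns purely for illustration.

```python
import sqlite3

# Stand-in for the ODBC connection: an in-memory SQLite database
# opened through the standard-library sqlite3 module.
conn = sqlite3.connect(":memory:")

# Define a cursor object on the connection.
cursor = conn.cursor()

# Hypothetical table and rows, created here only so the query has data to return.
cursor.execute("create table sample_table (id integer, name text)")
cursor.executemany(
    "insert into sample_table values (?, ?)",
    [(1, "a"), (2, "b"), (3, "c")],
)
conn.commit()

# Execute the query, then fetch every row as a list of tuples.
cursor.execute("select * from sample_table")
rows = cursor.fetchall()
print(rows)

conn.close()
```

With pyodbc or turbodbc, only the connection line would differ; the cursor calls follow the same shape.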
